Workflow Orchestration 1 - What is a workflow?

Introduction

It is hard to describe what a Workflow Platform is. It is both familiar and exotic. There are aspects of the problem space we all know well: Retries, eventual consistency, message processing semantics, visibility, heartbeating, and distributed processing to name a few. Yet, when they’re all put together in a pretty package with a bow tied on top, it becomes something almost magical. It feels like seeing the iPhone for the first time: Of course you want a touch screen on a cellphone and mobile internet access. Similarly, a workflow platform feels like the only natural way to solve problems. Once you learn it, anything else feels as clunky as using a feature phone.

Why learn about workflows?

I believe thinking in terms of workflows is as important as learning about Design Patterns. While dated, Design Patterns gave us a language with which to communicate ideas. In a similar way, learning workflows allows us to recognize patterns and relevant solutions for building robust systems with low maintenance cost.

Instead of defining what a workflow platform is, I will give two examples of workflow platforms. This is mainly because I tried creating such a definition and failed miserably. A workflow platform feels like the platypus of the software pattern world – it doesn’t fit neatly into any one category.

The platypus problem

The platypus problem is when you need to categorize something that stubbornly exists on the murky borders between categories, mocking our intuition that things can be organized into a well-defined taxonomy. The platypus dares to have a beak, thus ruining the statement “mammals don’t have beaks.” The platypus dares to produce milk while laying eggs, thus ruining the statement “mammals don’t lay eggs.” Oh and it doesn’t have teats. Because, you know, why would a mammal have nipples?

Whenever you are having trouble explaining something and the other person keeps asking, “Are you talking about A or B?”, just bring up the word platypus. They will immediately understand what you are talking about and stop pestering you with further questions.

A brief history of Apache Airflow

In 2014, data engineers at Airbnb set out to tackle the increasingly tedious problem of data pipelines. Data pipelines often require coordinating jobs across many systems and must complete in a timely fashion to support rapid experimentation and data analysis. They decided to build Airflow, a batch-oriented workflow system. It has a wealth of plugins for data sources and an intuitive UI. Airflow was incredibly successful and became an Apache project in 2016. The rest, as they say, is history.

In contrast to a simple sequential script, Airflow requires dependencies between processing steps to be explicitly described. This extra effort allows the platform to scale horizontally by distributing computing across servers. This also allows successful computation to be stored, avoiding expensive reprocessing of data. Finally, ordering between steps can be automatically evaluated, allowing independent steps to be parallelized automagically and dependent steps to immediately execute when their predecessors complete.

An example workflow from Data Pipelines with Apache Airflow.

In the above graph, Airflow will automatically determine that the fetch and clean steps can be executed in parallel. It will also retry failed steps according to your configuration.
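To make the idea of explicit dependencies concrete, here is a minimal sketch in plain Python. It is not Airflow code: it uses the standard library's `graphlib` (Python 3.9+) on a dependency map whose step names are my own hypothetical placeholders. It only shows how a platform can work out, from declared dependencies alone, which steps are safe to run side by side.

```python
from graphlib import TopologicalSorter

# Hypothetical step names; each step maps to the set of steps it depends on.
dependencies = {
    "fetch_weather": set(),
    "fetch_sales": set(),
    "clean_weather": {"fetch_weather"},
    "clean_sales": {"fetch_sales"},
    "join_datasets": {"clean_weather", "clean_sales"},
    "train_model": {"join_datasets"},
}


def execution_waves(deps):
    """Group steps into waves; steps within one wave do not depend on each other."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # also raises CycleError if the dependencies form a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every step whose predecessors are done
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave as finished
    return waves


waves = execution_waves(dependencies)
for number, wave in enumerate(waves, start=1):
    print(f"wave {number}: {wave}")
```

Grouping into waves is a simplification. A real scheduler would start a clean step as soon as its own fetch step finishes rather than waiting for the whole wave.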

In my opinion, Airflow’s most important strength is its intuitive UI. Its graphical and drill-down capable UI was a delight to many data scientists. Airflow allowed them to focus their energy on developing machine learning algorithms instead of debugging distributed systems.

Of all the workflow orchestration platforms, Airflow has gained the most mind share.

Airflow exhibits three features of workflow platforms:

- UI for managing and debugging workflows - A web-based UI that makes it easy for users to understand the status and progress of workflows.

- Distributed processing - Airflow allows computation to be distributed across multiple nodes.

- Fault tolerance - Airflow comes with built-in abstractions around retries, applying best practices such as exponential backoff.
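For readers who have not met exponential backoff before, a rough sketch of the idea follows. This is not how Airflow implements retries; the function name, parameters, and the flaky step are all hypothetical, and the sketch skips refinements such as jitter and a maximum delay.

```python
import time


def run_with_retries(step, max_attempts=4, base_delay=1.0, factor=2.0, sleep=time.sleep):
    """Call step() until it succeeds or max_attempts is reached.

    The wait before retry n is base_delay * factor ** (n - 1) seconds.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(base_delay * factor ** (attempt - 1))


# Usage: a hypothetical flaky step that fails twice before succeeding.
calls = {"count": 0}


def flaky_step():
    calls["count"] += 1
    if calls["count"] < 3:
        raise RuntimeError("transient failure")
    return "ok"


delays = []
# Record the delays instead of actually sleeping, so the example runs instantly.
result = run_with_retries(flaky_step, sleep=delays.append)
print(result, delays)
```

The value of a workflow platform is that you configure this behavior per step instead of hand-writing a loop like this around every call.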

A different approach

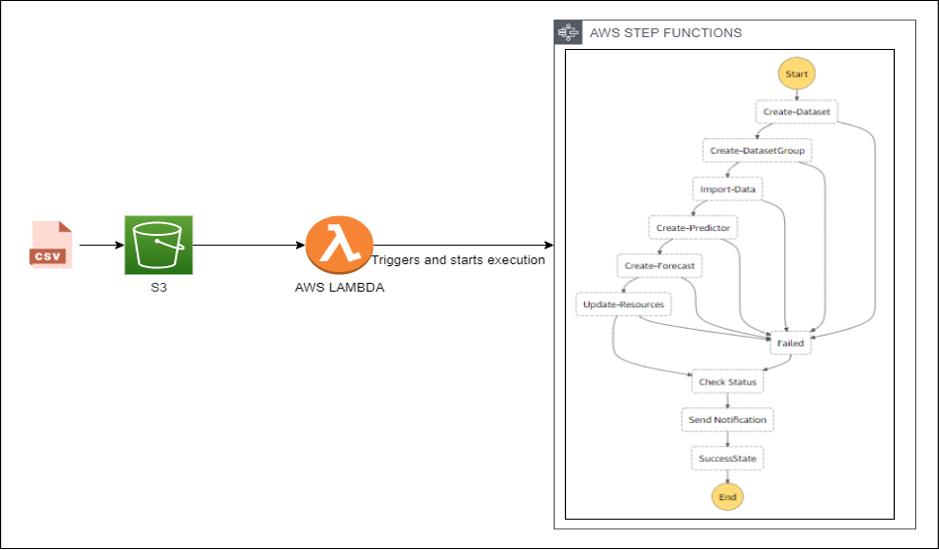

Announced at AWS re:Invent 2016, Step Functions was sold as a way to "[coordinate] the components of your application as a series of steps in a visual workflow" (original blog post).

Similar to Airflow, Step Functions supports retries, distributed processing, and a friendly UI for troubleshooting your workflows.

I was told this is easier to maintain than code.

The main difference between Step Functions and Airflow is that Airflow was designed around data ETLs, whereas Step Functions is meant to be a general-purpose workflow platform. As a consequence, they make very different trade-offs.

- Airflow’s workflow progresses according to a scheduler. This means a larger latency between each step of the progression. This is typically not a problem since the time to process data dominates.

- Step Functions can minimize latency between steps, supporting workflows that need to complete quickly.

- Airflow comes with a number of built-in plugins for data ingestion.

- Step Functions comes with integration with other AWS services.

- Airflow’s operating model supports concepts unique to data processing, such as backfilling and incremental data processing.

- Step Functions supports a synchronous invocation model, which can be useful for microservice orchestration.

Step Functions demonstrates another important use case for workflows – microservice orchestration.

Orchestrating hotel and airline bookings

Imagine you have a booking service that books a hotel and a flight as a combined package. How would you do that? Further assume the underlying hotel and flight booking services belong to different third parties. Your user should only be billed for the booking if both reservations succeed, yet you have little control over either system. One algorithm for solving this problem is two-phase commit (2PC).

In two-phase commit, a Coordinator talks to multiple nodes to write data. The Coordinator then executes the first phase of the commit, where it confirms with each node that the data has been written correctly. It then executes the second phase, where it signals each node to commit the data. Before each phase, the Coordinator durably records its intent (decision) to validate (phase 1) or commit (phase 2). This journaling behavior is crucial because the Coordinator itself may crash, and the journal allows it to resume 2PC correctly.
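The flow above can be sketched in a few lines of Python. Treat this as a toy model under loud assumptions: `Participant`, `two_phase_commit`, and the journal entries are names I made up, the journal is an in-memory list rather than durable storage, and crash recovery and timeouts are left out entirely.

```python
class Participant:
    """Hypothetical stand-in for a hotel or airline booking service."""

    def __init__(self, name, can_reserve=True):
        self.name = name
        self.can_reserve = can_reserve
        self.state = "idle"

    def prepare(self):
        # Phase 1: hold the reservation without finalizing it, then vote.
        self.state = "prepared" if self.can_reserve else "refused"
        return self.can_reserve

    def commit(self):
        self.state = "committed"

    def abort(self):
        self.state = "aborted"


def two_phase_commit(participants, journal):
    """Run 2PC, appending each intent to the journal before acting on it."""
    journal.append("begin-prepare")  # intent to validate (phase 1)
    votes = [p.prepare() for p in participants]

    decision = "commit" if all(votes) else "abort"
    journal.append(f"decision:{decision}")  # intent to commit or abort (phase 2)
    for p in participants:
        if decision == "commit":
            p.commit()
        else:
            p.abort()
    journal.append("done")
    return decision


# Usage: the flight cannot be reserved, so the hotel hold is released too.
journal = []
hotel = Participant("hotel")
flight = Participant("flight", can_reserve=False)
outcome = two_phase_commit([hotel, flight], journal)
print(outcome, journal, hotel.state, flight.state)
```

A restarted Coordinator that finds `decision:commit` in the journal without a trailing `done` would know to re-send the commit signals instead of starting over. That recovery step is the part a workflow platform handles for you.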

While one can use Airflow to implement 2PC, it will be inefficient because each step must wait for the scheduler to run again before progressing. In this case, a general-purpose workflow engine like Step Functions can complete more quickly. In an ideal situation where there are no errors and the nodes are snappy, the system will appear responsive. In the inevitable situation where the airline or hotel nodes are sluggish or time out, the UI can query the workflow for progress and indicate a failure after a predetermined overall timeout.

A personal opinion on Step Functions

Like many AWS services, there’s a developer-experience vs infrastructure-as-a-service trade-off. Personally, I’ve had a poor experience maintaining and deploying Step Functions State Machines, the details of which can be an article for another day. However, you don’t need an Ops team to maintain the Step Functions infrastructure. That’s often a deciding factor for small companies.

Intermission

Please don’t use the plural form for your product name. Seriously, why would you do this? It forces awkward grammar where one uses the singular verb for a seemingly plural noun.

John: “Apples is delicious.”

Daughter: “Dad, you mean apples are delicious.”

John: “No. I meant Apples is delicious. AWS Apples is a cloud based fruit that is guaranteed to be delicious as long as you don’t exceed the tastes per account quota.”

Daughter: (◔_◔)

Why should I care?

In the second part of this series, I’ll give some hypothetical scenarios where a workflow platform makes sense. It turns out the use cases for workflows are more common than you (might) think.

Lastly, the third part of this series will feature Temporal, a general-purpose workflow platform similar to AWS Step Functions. Temporal was created by the original leads of Uber Engineering’s Cadence framework and Microsoft’s Azure Service Bus. I would highly recommend looking at Temporal if you are looking for a high-performance and productive workflow platform.