AI and Software Development 2026

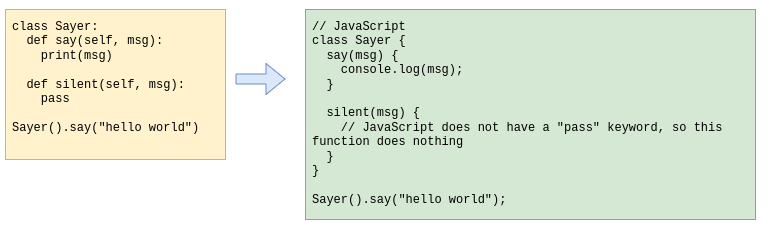

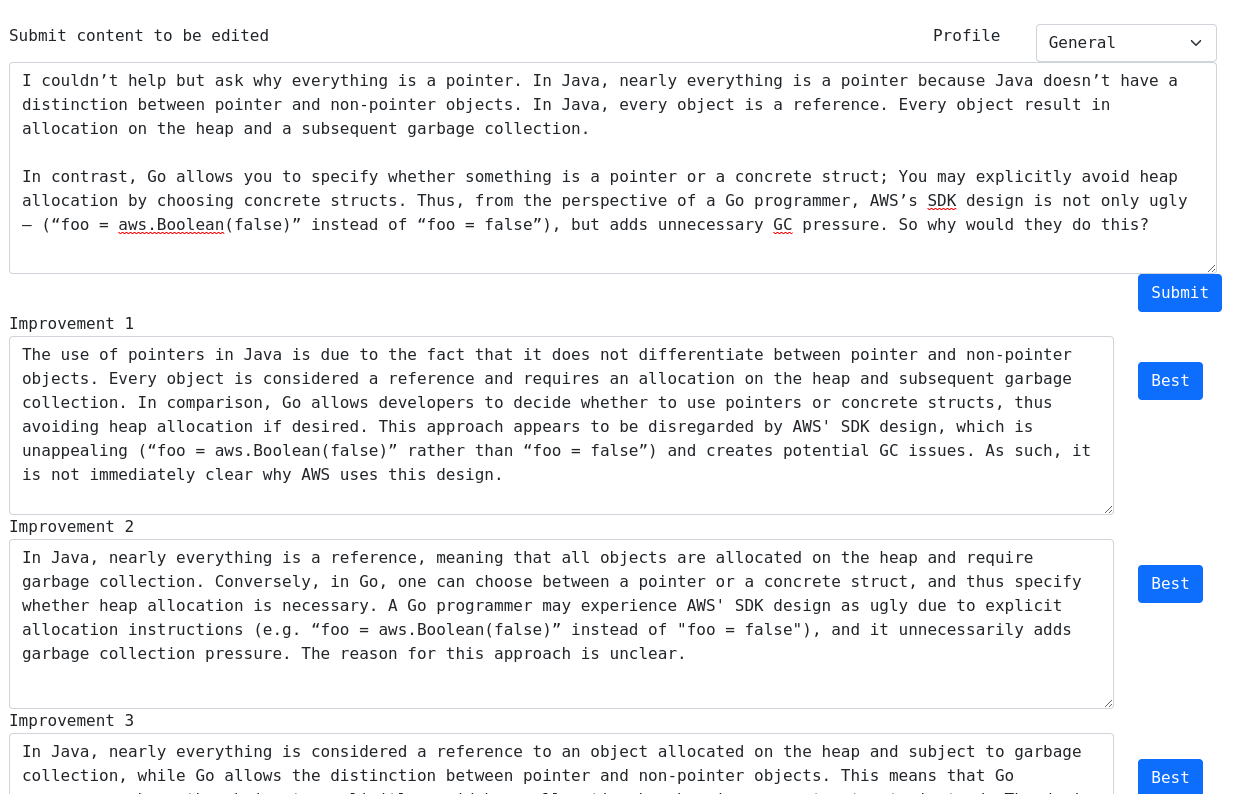

When ChatGPT was released in November 2022, it was already a better programmer than I was. By December, I was using it consistently whenever I wrote code. In 2023, I relied on ChatGPT to create an online puzzle game for my kids. At that time, my coding work was mostly architecture decisions, code review, refactoring, and testing. I was still writing some of the code by hand, because small changes were faster to make myself than to copy the right context into ChatGPT. By early 2025, I had started using Cursor, one of the first coding agents, and at that point I stopped writing code completely. Coding agents solved the context problem of chatbots: the agent has complete access to your file system and codebase, and it can autonomously explore your computer to populate its own context. From then on, my involvement was limited to architecture, requirements definition, and testing. I review AI-written code only to understand the decisions it made, not to identify bugs or suggest improvements.